By Sarah Yáñez-Richards

New York (EFE).- Chatbots powered by artificial intelligence (AI) can generate, in a matter of seconds, answers on any subject similar to those a human being could give.

The most popular at the moment are Google’s Bard, Microsoft’s Bing, and OpenAI’s ChatGPT.

EFE compares the three models with a variety of questions, riddles and requests to see the difference between their answers.

For this experiment, EFE uses OpenAI's GPT-4, which is available for a subscription of $20 per month. (OpenAI also offers free services, such as ChatGPT, but these rely on inferior technology, and the chatbot only has access to internet data up to 2021.)

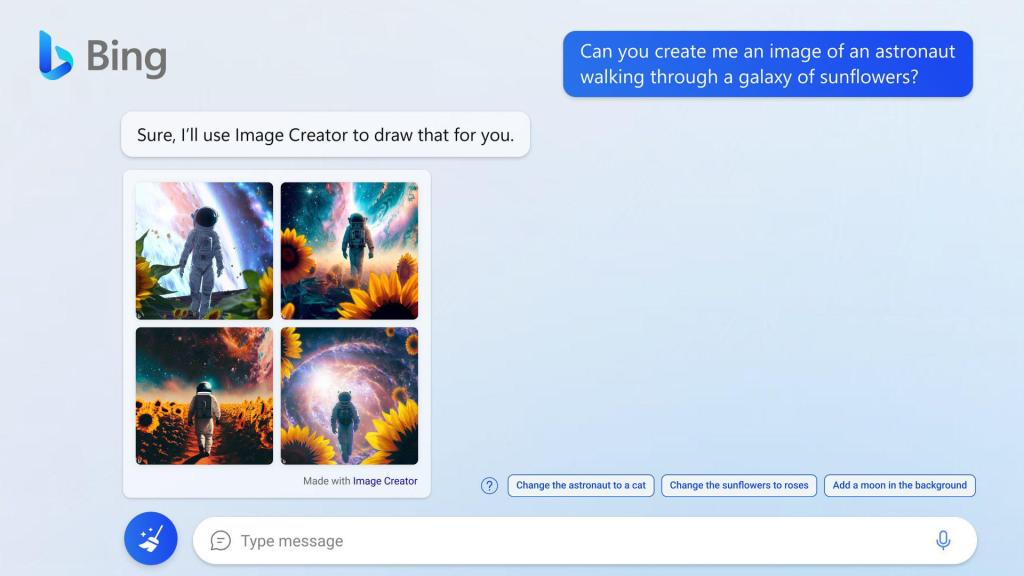

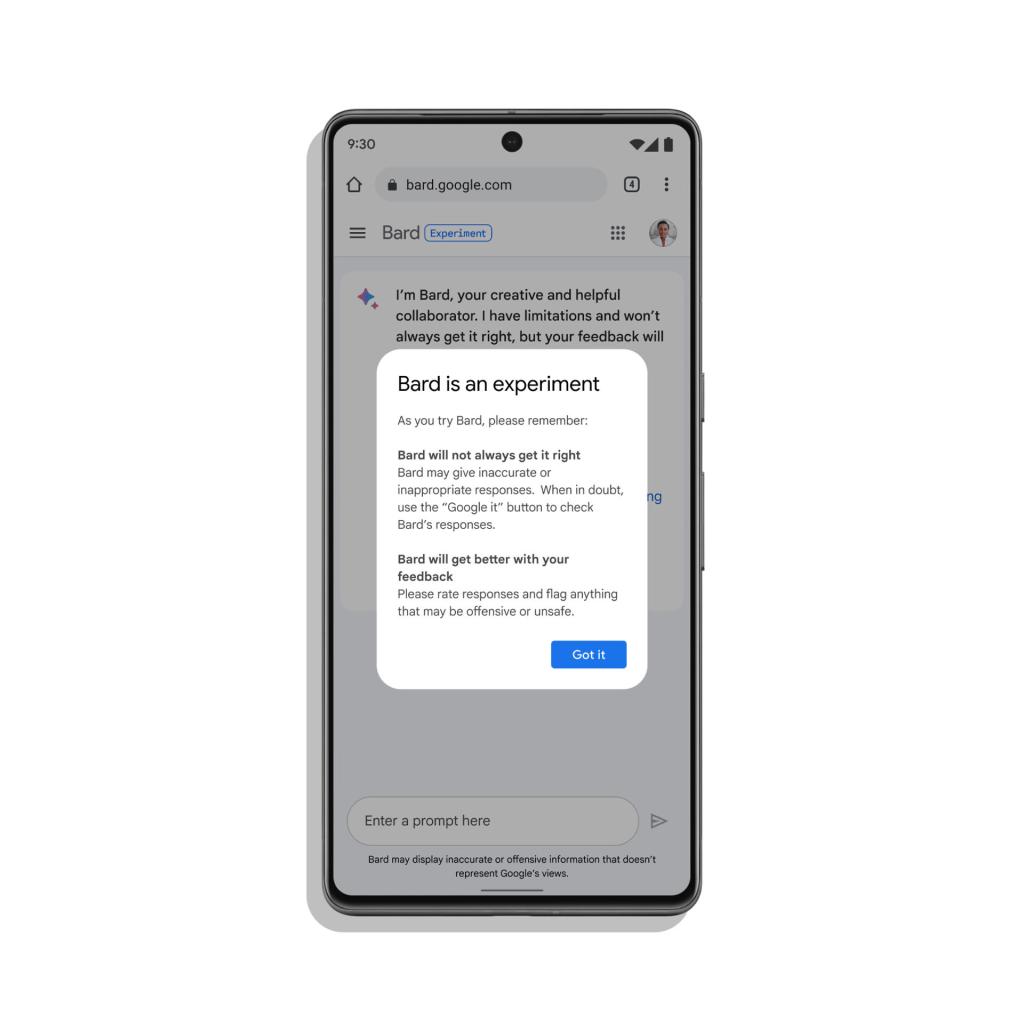

It also uses Microsoft’s Bing, powered by OpenAI’s GPT-4 technology, and Bard, Google’s first experimental version, which only a select group of people can access.

Chatbot: “I won’t always get it right”

All three tools display messages warning that their answers may be wrong.

“I have limitations and I will not always do well,” says the Google service.

GPT-4 also stresses that its chatbot “is not intended to give advice.”

Bing’s message, meanwhile, says that “surprises and errors are possible,” adding: “Be sure to check the facts and share your feedback so we can learn and improve!”

Not all chatbots speak Spanish

To the question: “Can I ask you things in Spanish?”, Bing and ChatGPT answer: “Yes”.

Bard, however, replies in English that it cannot “provide assistance with that” as it is “capable of understanding and responding to only a subset of languages at this time,” implying that Spanish is not one of those languages.

For that reason, the questions and answers in this experiment are in Spanish for Bing and ChatGPT, and in English for Bard.

A recipe

“Give me a cheap vegetarian recipe,” asks EFE. GPT-4 recommends “vegetarian lentils”, Bing “lentil rice with vegetables” and Bard “tofu scramble”.

The three chatbots followed the same system, first listing the ingredients and then giving the preparation instructions.

Both GPT-4 and Bard went a step further and offered additional information beyond the recipe itself.

“You can adapt it to your preferences by adding more vegetables, spices or even adding spinach or kale at the end of cooking to increase its nutrient content,” GPT-4 comments at the end of its message.

Bard, for its part, emphasizes at the end of its message that its recipe is “a good source of protein and fiber.”

Where does the information come from?

Microsoft and Google have their own search engines and it benefits them to redirect users to other websites.

At the end of each Bard answer there is a button that says “Google it”, while Bing shows a tag that says “learn more”, under which it gives a list of links.

In the case of the recipe, the Microsoft tool provides links to recetasderechupete.com, tendencias.com, kiwilimon.com and clara.es.

For its part, OpenAI does not give any external link or option to know the source of the information.

An exam of Spanish literature and culture

The next test is a question from an Advanced Placement (AP) Spanish Literature and Culture exam, one of the exams that American high school students can take to earn college credit.

The three chatbots are given a fragment of a text and asked to identify the author, as well as to explain “the development of the theme of the relationship between time and space within the work to which it belongs.”

Students are advised to spend 15 minutes answering this question, but the chatbots give their replies in less than a minute.

According to Ana García Alonso, a philologist and teacher of Spanish and social studies in the US, the only answer she would pass is Bard’s, but since it was written in English and not in Spanish, she would have to fail it as well.

In its 355-word response in English, the Google tool recognizes that the excerpt from “Mi caballo mago” was written by Sabine R. Ulibarrí and then analyzes the text over several paragraphs.

“It’s very good, but it lacks reading sensibility; it doesn’t capture the mechanisms that create beauty, art and literary sensibility,” notes García Alonso.

Bing’s answer is the shortest, at 123 words. This chatbot also identifies the author, says the text “is considered one of the most important works of Chicano literature,” and explains the rest in one paragraph.

“It is very concise and lacks analysis,” notes the philologist, who adds that she would not pass this chatbot’s answer.

OpenAI gave the longest answer, 360 words, but it was the worst answer, according to García Alonso.

Even though the question states that the text is titled “Mi caballo mago”, GPT-4 answers that the fragment belongs to the short story “El Sur” by the Argentine author Jorge Luis Borges.

Riddles

“What day of the year do charlatans speak the least?” EFE asks the AI chatbots, without specifying that it is a riddle.

Bing is the only one that solves the riddle: “It is the day on which the clocks are moved forward in spring.”

Bard and GPT-4, by contrast, say that there is no specific day and offer additional information on charlatans.

Chatbots write the conclusions

In the final test, EFE asks the chatbots to write the last paragraph (maximum 40 words) of this article, but all the answers were longer, so they have been edited.

Bing responds: “Ultimately, the success of each company will depend on its ability to innovate and adapt as the field of AI evolves.”

Bard notes: “In conclusion, OpenAI’s ChatGPT is known for its ability to generate creative and engaging text, while Microsoft’s 365 Copilot – of which Bing is a part – is designed to be more useful and productive. Google’s Bard is still in development, but has the potential to be the most comprehensive and informative AI answer of all.”

“In conclusion, to get the most out of these tools, it is essential to understand their particularities and select the most appropriate one according to the specific needs of the user,” concludes GPT-4.